Nature of Code - Week 5

14 Apr 2017

courses, documentation, noc

Wikipediae is an alternate version of Wikipedia generated by a recurrent neural network (RNN) trained on a data dump of Wikipedia.

The goal of the project is to host a series of linked webpages that look like Wikipedia but whose text is entirely generated. I am experimenting with RNN libraries, training each on a data dump of Wikipedia, to find the one whose output is most similar to the training corpus. That means the output will be in Markdown, with articles that link to each other and contain text following the typical format of a Wikipedia article. Ideally, the output will describe an entire alternate universe, with its own countries and geography, political systems, celebrities, cultural events, and so on.
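To make the training idea a bit more concrete, here is a minimal Python sketch of how a character-level model (such as the char-rnn library mentioned in this post) typically sees its corpus: every distinct character gets an integer index, and the network learns to predict the next character from the ones before it. This is only an illustration under my own assumptions, not the code any of these libraries actually runs. The function names, the `seq_length` parameter, and the sample sentence with its `.md` link targets are all hypothetical.

```python
def build_vocab(text):
    """Map each distinct character to an integer index (sorted order)."""
    chars = sorted(set(text))
    char_to_idx = {ch: i for i, ch in enumerate(chars)}
    idx_to_char = {i: ch for ch, i in char_to_idx.items()}
    return char_to_idx, idx_to_char


def encode(text, char_to_idx):
    """Turn a string into a list of integer indices."""
    return [char_to_idx[ch] for ch in text]


def decode(indices, idx_to_char):
    """Turn a list of integer indices back into a string."""
    return "".join(idx_to_char[i] for i in indices)


def training_pairs(encoded, seq_length):
    """Yield (input, target) windows of equal length.

    The target is the input shifted one character to the right,
    so the model is asked to predict the next character at each step.
    """
    for start in range(0, len(encoded) - seq_length, seq_length):
        x = encoded[start:start + seq_length]
        y = encoded[start + 1:start + seq_length + 1]
        yield x, y


if __name__ == "__main__":
    # Hypothetical stand-in for a slice of the corpus, written as Markdown.
    sample = "[Paris](paris.md) is the capital of [France](france.md)."
    char_to_idx, idx_to_char = build_vocab(sample)
    encoded = encode(sample, char_to_idx)

    # Encoding is lossless: decoding gives the original text back.
    assert decode(encoded, idx_to_char) == sample

    for x, y in training_pairs(encoded, seq_length=8):
        print(repr(decode(x, idx_to_char)), "->", repr(decode(y, idx_to_char)))
```

Because the model only ever sees characters, the brackets and parentheses of a Markdown link are just more symbols to predict. That is why the generated text can end up with link syntax and article structure without those ever being described to it explicitly.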

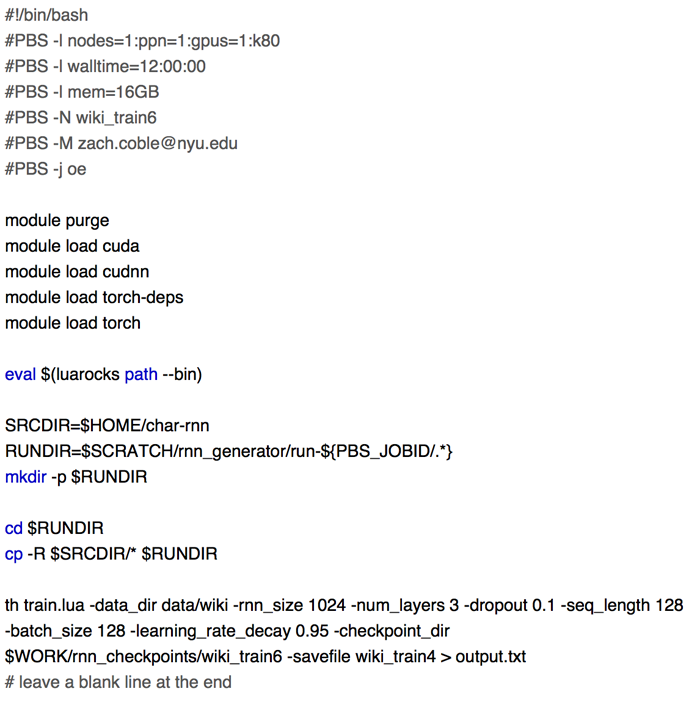

I’m currently experimenting with the char-rnn library, and using NYU’s HPC environment for training. I would also like to try torch-rnn and TensorFlow. Here’s an example of a script using char-rnn:

And here’s an example of the sampling output:

- Email: coblezc@gmail.com

Twitter: @coblezc - CC-BY